ByteDance recently unveiled its latest AI project, "DreamActor-M1," a video generation model. It combines a static photograph with a reference action video, placing the photo's subject into the video scene to generate footage with refined expressions, natural movements, and high-definition quality. The launch marks another step forward for ByteDance in generative AI and poses a significant challenge to existing animation generation tools such as Runway's Act-One.

DreamActor-M1's core innovation lies in its precise control and its consistent rendering of details. Traditional image-to-video generation methods often suffer from lifeless expressions, unnatural transitions, and inconsistencies in longer videos. DreamActor-M1 is designed to overcome these problems. From the subtle curve of a smile to the natural rhythm of a blink and the tremor of a lip, the model achieves striking realism. It simultaneously controls body movements (turning the head, raising a hand, or even dancing), keeping the whole performance coordinated and fluid.

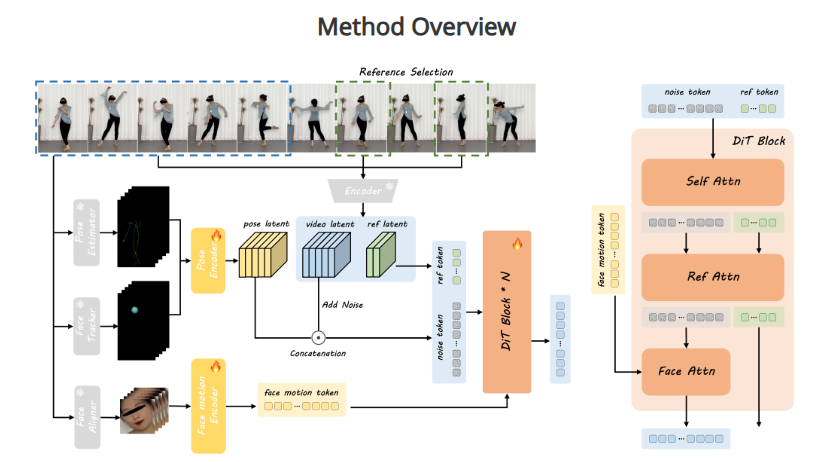

Technical analysis suggests this achievement builds on ByteDance's extensive expertise in deep learning and video processing. DreamActor-M1 captures movement patterns from the reference video and merges them with the subject's features from the static photo. The result retains the subject's original identity while avoiding common distortions and unnatural movements. The output is high-definition and comes close to the look of real filmed footage.

Industry experts point out that DreamActor-M1 fills a significant gap in AI video generation. Compared with existing technologies such as Runway's Act-One, it excels in fine-grained control (for example, micro-expression reproduction) and multi-dimensional movement synchronization (coordination between facial and body movements). This makes it promising for a range of applications: in filmmaking, directors could quickly generate dynamic character performances from a single photo; on social media, users could transform their photos into lively animations; and in education or virtual reality, it could support immersive content creation.

However, DreamActor-M1's launch also raises deeper questions about how it will be used. Its highly realistic generation capabilities could revolutionize digital content creation, but they may also intensify concerns around deepfakes and privacy. ByteDance has not disclosed the model's training data or commercialization plans, and many observers are waiting for more details on how it will balance technological innovation with ethical considerations.

ByteDance, the parent company of TikTok, is deepening its AI investments. From image generation to video animation, its R&D is moving toward increasingly complex multimodal work. The release of DreamActor-M1 is both a demonstration of its technical capabilities and a significant step in the global AI race. As the model is further refined and promoted, it could reshape video content creation and open up new possibilities for users and industries.

Project page: https://grisoon.github.io/DreamActor-M1/