GEO Brand Visibility

All-in-One GEO Brand Insights Platform

AI Visibility Audit

Quickly check how your brand is perceived and presented in AI-powered search results.

AI Search Visibility Checker

Detect brand's visibility on AI platforms

GEO Promotion Link Detection

Quickly evaluate the citation of promotion articles on AI platforms

MCP Servers

Discover Popular AI-MCP Services - Find Your Perfect Match Instantly

MCP Client

Easy MCP Client Integration - Access Powerful AI Capabilities

MCP Case Tutorials

Master MCP Usage - From Beginner to Expert

MCP Ranking

Top MCP Service Performance Rankings - Find Your Best Choice

MCP Service Submission

Publish & Promote Your MCP Services

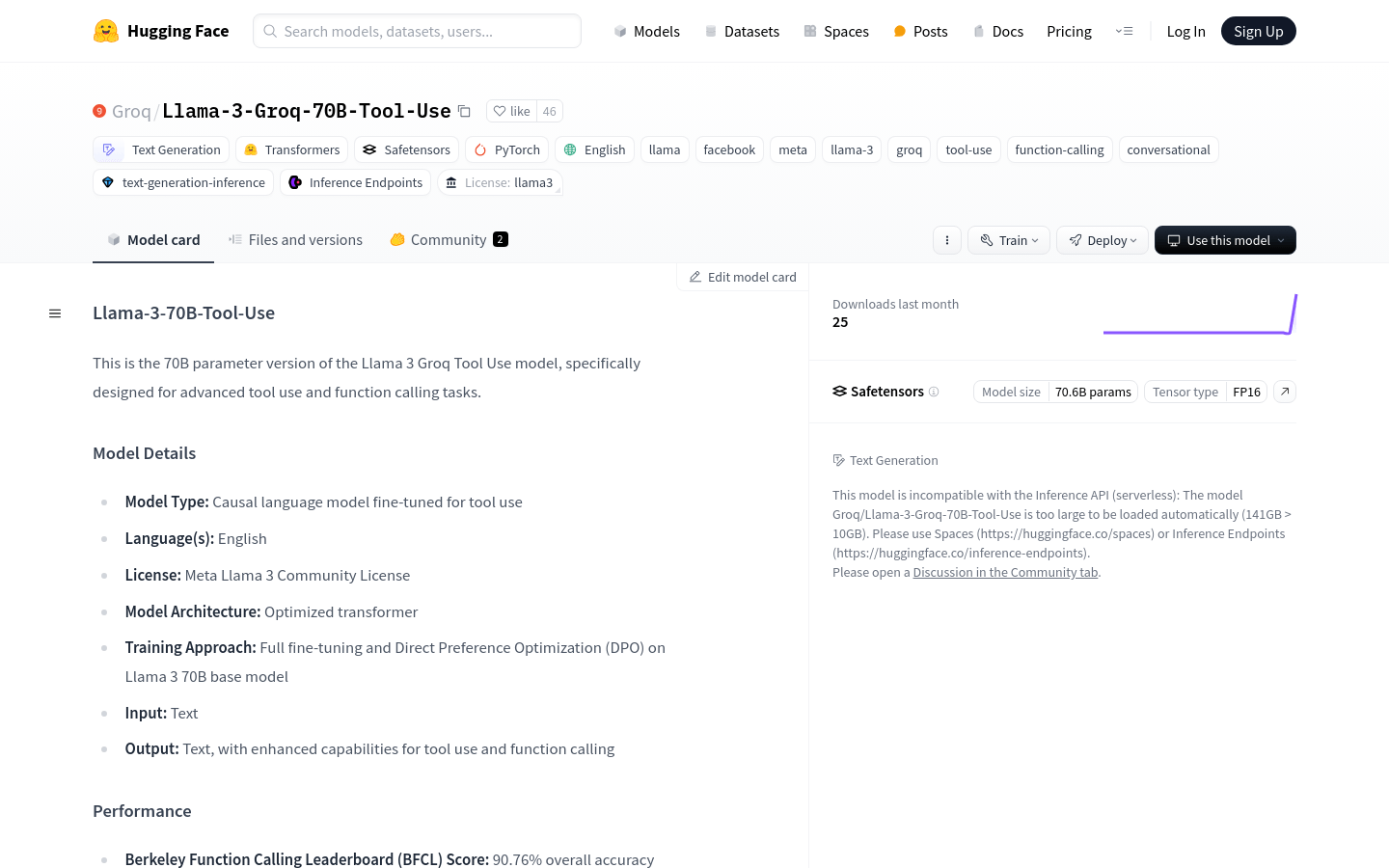

Compare LLMs

Multi-Dimensional Large Model Comparison - Find Your Perfect Match

LLM Cost Calculator

Calculate AI Model Costs Accurately - Optimize Your Budget

LLM Arena

Multi-Model Real-Time Evaluation & Quick Output Comparison

AI Model Compatibility Checker

Free PC Hardware Test for DeepSeek & Llama

AI Deployment Calculator

Enter Your Large Model Computing Requirements for Instant GPU, Memory & Server Configuration Recommendations