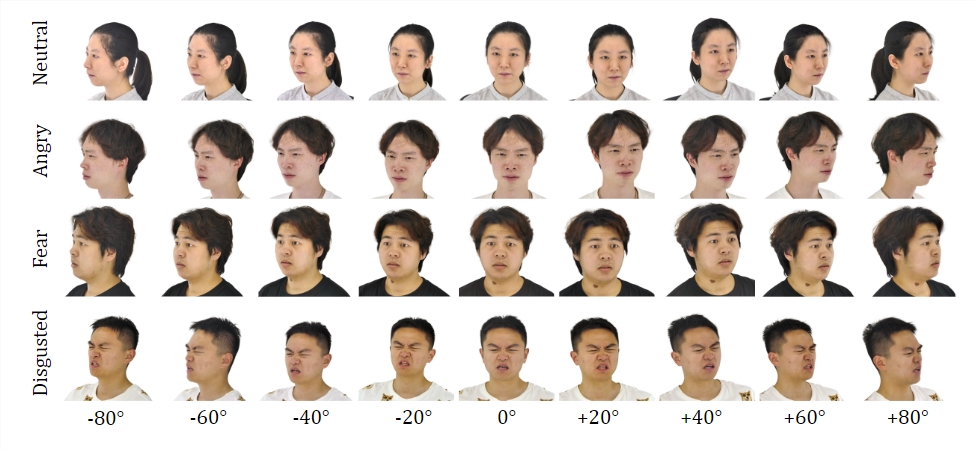

EmoTalk3D, a recent research project on synthesizing high-fidelity, emotionally expressive 3D talking avatars, has drawn considerable attention in the artificial intelligence community. A central contribution of the project is a new dataset, the EmoTalk3D dataset, which provides calibrated multi-view videos, emotion annotations, and per-frame 3D geometry.
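To make the dataset's three ingredients concrete, here is a minimal Python sketch of how a single recorded clip might be organized and sanity-checked. The layout is an assumption for illustration only, not the released EmoTalk3D format. The class names (`Camera`, `Frame`, `Clip`), the `validate_clip` helper, the per-clip emotion label, the per-clip static calibration, and the fixed-topology vertex list are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Camera:
    # Hypothetical calibration record for one camera.
    intrinsics: List[List[float]]  # 3x3 matrix K
    extrinsics: List[List[float]]  # 3x4 matrix [R | t]


@dataclass
class Frame:
    images: Dict[str, str]  # camera id -> image file path for this time step
    vertices: List[Vec3]    # per-frame 3D geometry (assumed fixed-topology mesh)


@dataclass
class Clip:
    emotion: str               # emotion annotation (assumed one label per clip)
    cameras: Dict[str, Camera]  # calibration assumed shared by all frames
    frames: List[Frame] = field(default_factory=list)


def validate_clip(clip: Clip) -> List[str]:
    """Return a list of consistency problems (empty if the clip looks well-formed)."""
    problems = []
    for name, cam in clip.cameras.items():
        if len(cam.intrinsics) != 3 or any(len(r) != 3 for r in cam.intrinsics):
            problems.append(f"camera {name}: intrinsics must be 3x3")
        if len(cam.extrinsics) != 3 or any(len(r) != 4 for r in cam.extrinsics):
            problems.append(f"camera {name}: extrinsics must be 3x4")
    expected_views = set(clip.cameras)
    vertex_count = len(clip.frames[0].vertices) if clip.frames else 0
    for i, frame in enumerate(clip.frames):
        # Every frame should have one image per calibrated camera.
        if set(frame.images) != expected_views:
            problems.append(f"frame {i}: views do not match calibrated cameras")
        # A fixed-topology mesh keeps the same vertex count across frames.
        if len(frame.vertices) != vertex_count:
            problems.append(f"frame {i}: vertex count {len(frame.vertices)} != {vertex_count}")
    return problems


# Usage: a one-camera, one-frame clip with toy values.
K = [[1000.0, 0.0, 512.0], [0.0, 1000.0, 512.0], [0.0, 0.0, 1.0]]
Rt = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 2.0]]
clip = Clip(emotion="happy", cameras={"cam00": Camera(K, Rt)})
clip.frames.append(Frame({"cam00": "cam00/000000.png"}, [(0.0, 0.0, 0.0)]))
print(validate_clip(clip))  # []
```

Keeping calibration, emotion labels, and geometry together per clip is what lets a method supervise appearance from many viewpoints at once. A check like this catches missing views before training.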

According to the research team, existing 3D talking avatar methods fall short in multi-view consistency and emotional expressiveness. To address these shortcomings, the team proposes a new synthesis method that improves lip synchronization and rendering quality and also makes the emotional expression of the generated avatars controllable.

At the core of the method is a "speech to geometry to appearance" mapping framework. The framework first predicts a faithful sequence of 3D geometry from audio features, then uses that geometry to synthesize the avatar's appearance, which is represented as 4D Gaussians. The appearance is further decomposed into canonical and dynamic Gaussian components, both learned from the multi-view videos and then fused, so that the talking-avatar animation can be rendered from free viewpoints.
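The two-stage flow described above can be illustrated with a deliberately toy Python sketch. It is not the EmoTalk3D implementation: the real stages are learned networks, while here a linear blendshape model stands in for audio-to-geometry prediction, and a simple "darken where the surface moved" rule stands in for the dynamic Gaussian component. All names (`Gaussian`, `speech_to_geometry`, `geometry_to_appearance`, `wrinkle_gain`) and the choice to anchor one Gaussian per mesh vertex are hypothetical, and rendering is omitted.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def add(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


def scale(a: Vec3, s: float) -> Vec3:
    return (a[0] * s, a[1] * s, a[2] * s)


@dataclass(frozen=True)
class Gaussian:
    mean: Vec3      # 3D center (canonical Gaussians store it relative to a vertex)
    color: Vec3     # RGB in [0, 1]
    opacity: float  # scale and rotation omitted to keep the sketch short


def speech_to_geometry(
    audio_features: Sequence[Sequence[float]],
    neutral: Sequence[Vec3],
    basis: Sequence[Sequence[Vec3]],
) -> List[List[Vec3]]:
    """Stage 1 (toy stand-in): use each audio feature vector as blend weights
    over per-vertex displacement bases, giving one mesh per audio frame."""
    sequence = []
    for weights in audio_features:
        frame = []
        for v, pos in enumerate(neutral):
            for k, w in enumerate(weights):
                pos = add(pos, scale(basis[k][v], w))
            frame.append(pos)
        sequence.append(frame)
    return sequence


def geometry_to_appearance(
    frame: Sequence[Vec3],
    neutral: Sequence[Vec3],
    canonical: Sequence[Gaussian],
    wrinkle_gain: float = 0.5,
) -> List[Gaussian]:
    """Stage 2 (toy stand-in): fuse each canonical Gaussian (static appearance)
    with a dynamic term driven by how far its vertex moved from neutral."""
    fused = []
    for v, g in enumerate(canonical):
        offset = tuple(p - q for p, q in zip(frame[v], neutral[v]))
        motion = sum(c * c for c in offset) ** 0.5
        darken = min(1.0, wrinkle_gain * motion)  # crude proxy for wrinkle shading
        fused.append(Gaussian(
            mean=add(frame[v], g.mean),           # follow the predicted geometry
            color=scale(g.color, 1.0 - darken),   # canonical color modulated dynamically
            opacity=g.opacity,
        ))
    return fused


# Usage: two vertices, one "jaw open" basis that only moves vertex 0.
neutral = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
basis = [[(0.0, 1.0, 0.0), (0.0, 0.0, 0.0)]]
canonical = [Gaussian((0.0, 0.0, 0.01), (0.8, 0.6, 0.5), 1.0) for _ in neutral]
audio = [[0.0], [0.4]]  # two audio frames: closed, then partly open
geometry = speech_to_geometry(audio, neutral, basis)
frames = [geometry_to_appearance(f, neutral, canonical) for f in geometry]
```

The per-frame lists of Gaussians form a time-varying (4D) set. Splitting appearance into a shared canonical part and a per-frame dynamic part means the static identity is stored once, while only expression-dependent changes vary over time.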

The team also reports that the method captures dynamic facial details, such as wrinkles and subtle expressions, that previous methods struggled to reproduce. In their experiments, the method shows a clear advantage in generating high-fidelity, emotion-controllable 3D talking avatars, with better rendering quality and more stable lip-motion generation.

The code and dataset for EmoTalk3D have been released at a specified HTTPS URL for researchers and developers worldwide to use. The work is expected to advance the field of 3D talking avatars, with potential applications in virtual reality, augmented reality, and film production.