DeepSeek recently launched a series of models that have caused a stir in the global AI community. DeepSeek-V3 achieves high performance at low cost and is comparable to top closed-source models in various evaluations. DeepSeek-R1, trained with innovative methods, demonstrates powerful reasoning capabilities that match the official release of OpenAI's o1, and its model weights are open source, bringing new breakthroughs and insights to the AI field.

DeepSeek has also publicly disclosed its training techniques. R1 competes with OpenAI's o1 model and makes extensive use of reinforcement learning in the post-training phase. DeepSeek claims that R1 performs comparably to o1 on tasks such as mathematics, coding, and natural language reasoning, at an API cost of less than 4% of o1's.

DeepSeek R1 is Impressive! Meta Engineers in Panic: Frantically Analyzing to Attempt Replication

Recently, an anonymous post by a Meta employee titled "Meta genai org in panic mode" went viral on teamblind, an anonymous workplace community. According to the post, the launch of DeepSeek V3 has left Llama 4 significantly behind in benchmark tests, causing panic in Meta's generative AI team, and a "lesser-known Chinese company" achieved this breakthrough with a training budget of just $5.5 million.

The post says Meta engineers are frantically dissecting DeepSeek in an attempt to replicate its technology, while management is anxious about justifying the department's high costs to upper management: the salary of each team "leader" exceeds the training cost of DeepSeek V3, and dozens of such "leaders" are on the payroll. The emergence of DeepSeek R1 has worsened the situation; some information cannot yet be disclosed, but it will be made public soon and may make matters even more unfavorable.

Translation of the anonymous post by a Meta employee (translated by DeepSeek R1):

Meta's Generative AI Department is in Emergency Mode

It all started with DeepSeek V3, which made Llama 4's benchmark scores seem outdated in an instant. Even more embarrassing, "a lesser-known Chinese company achieved such breakthroughs with a mere $5.5 million training budget."

The engineering team is frantically dismantling DeepSeek's architecture, trying to replicate all its technical details. This is no exaggeration; our codebase is undergoing a thorough search.

Management is struggling to justify the department's massive expenditures. When every "leader" in the generative AI department has an annual salary that exceeds the entire training cost of DeepSeek V3, and we have dozens of such "leaders," how can they explain this to upper management?

DeepSeek R1 has made the situation even more dire. While confidential information cannot be disclosed, related data will soon be made public.

What should be a lean, tech-driven team has seen its structure artificially inflated due to a surge of personnel vying for influence. The result of this power struggle? In the end, everyone becomes a loser.

Overview of the DeepSeek Series Models

DeepSeek-V3: A mixture-of-experts (MoE) language model with 671B total parameters, of which 37B are activated per token. It employs the Multi-head Latent Attention (MLA) and DeepSeekMoE architectures. After pre-training on 14.8 trillion high-quality tokens, followed by supervised fine-tuning and reinforcement learning, it outperforms other open-source models and is comparable to top closed-source models such as GPT-4o and Claude 3.5 Sonnet. Training was both inexpensive and stable: the full run required only 2.788 million H800 GPU hours, or approximately $5.576 million.

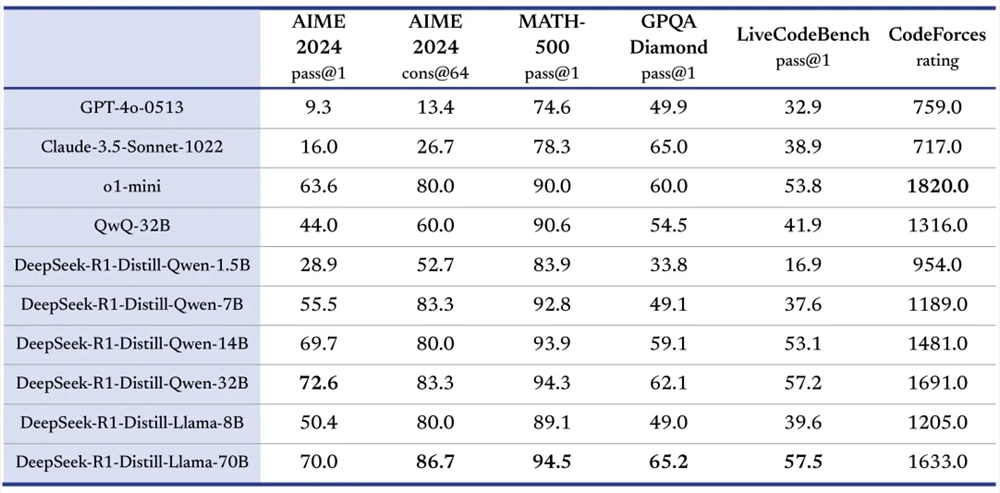
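The headline numbers for DeepSeek-V3 can be checked with simple arithmetic. The sketch below is only an illustration: the GPU hours and parameter counts come from the text above, while the rental price of $2 per H800 GPU hour is an assumption that is not stated in this article (it is the rate that makes the quoted GPU hours line up with the quoted dollar figure). The function names are hypothetical.

```python
# Figures quoted in the text above.
GPU_HOURS = 2_788_000      # H800 GPU hours for the full training run
TOTAL_PARAMS_B = 671       # total parameters, in billions
ACTIVE_PARAMS_B = 37       # parameters activated per token, in billions

# Assumption (not stated in this article): $2 rental price per H800 GPU hour.
PRICE_PER_GPU_HOUR_USD = 2.0


def training_cost_usd(gpu_hours: float, price_per_hour: float) -> float:
    """Estimated training cost as GPU hours times the hourly rental price."""
    return gpu_hours * price_per_hour


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of the model's parameters that is used for a single token."""
    return active_b / total_b


cost = training_cost_usd(GPU_HOURS, PRICE_PER_GPU_HOUR_USD)
share = active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)

print(f"Estimated training cost: ${cost / 1e6:.3f} million")
print(f"Parameters active per token: {share:.1%}")
```

Under that pricing assumption the estimate comes to $5.576 million, and only about 5.5% of the parameters are active for any given token, which is the main reason a mixture-of-experts model of this size is comparatively cheap to train and run.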

DeepSeek-R1: Includes DeepSeek-R1-Zero and DeepSeek-R1. DeepSeek-R1-Zero is trained with large-scale reinforcement learning and no supervised fine-tuning (SFT); it demonstrates self-verification and reflection capabilities, though it suffers from poor readability and language mixing. DeepSeek-R1 builds on DeepSeek-R1-Zero by introducing multi-stage training and cold-start data to address these issues, and it matches the official release of OpenAI's o1 in tasks such as mathematics, coding, and natural language reasoning. DeepSeek has also open-sourced several models of varying parameter sizes to promote the development of the open-source community.

What Makes DeepSeek So Special?

Outstanding Performance: DeepSeek-V3 and DeepSeek-R1 excel in multiple benchmark tests. For example, DeepSeek-V3 achieves excellent results in assessments like MMLU and DROP; DeepSeek-R1 has high accuracy in tests such as AIME 2024 and MATH-500, matching or even surpassing OpenAI's o1 official version in some aspects.

Innovative Training:

DeepSeek-V3 employs an auxiliary-loss-free load-balancing strategy, which reduces the performance degradation that load balancing would otherwise cause, and a multi-token prediction (MTP) training objective, which enhances model performance. It also uses FP8 training, validating its feasibility for large-scale models.

DeepSeek-R1-Zero optimizes the model purely through reinforcement learning training, relying only on simple reward and punishment signals, demonstrating that reinforcement learning can enhance model reasoning capabilities; DeepSeek-R1 builds on this by fine-tuning with cold-start data to improve model stability and readability.
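To make the idea of "simple reward and punishment signals" concrete, here is a minimal sketch of a rule-based reward of the kind that can drive this style of reinforcement learning. It is an illustration, not DeepSeek's actual implementation: the `<think>`/`<answer>` output template, the exact-match comparison, the equal weighting of the two terms, and all function names are assumptions made for this example.

```python
import re

# Assumed output template: reasoning inside <think>...</think>,
# followed by the final answer inside <answer>...</answer>.
TEMPLATE = re.compile(
    r"^\s*<think>(.+?)</think>\s*<answer>(.+?)</answer>\s*$",
    re.DOTALL,
)


def format_reward(completion: str) -> float:
    """1.0 if the completion follows the assumed template, else 0.0."""
    return 1.0 if TEMPLATE.match(completion) else 0.0


def accuracy_reward(completion: str, reference: str) -> float:
    """1.0 if the extracted answer exactly matches the reference, else 0.0."""
    match = TEMPLATE.match(completion)
    if match is None:
        return 0.0
    return 1.0 if match.group(2).strip() == reference.strip() else 0.0


def total_reward(completion: str, reference: str) -> float:
    """Sum of both signals; the equal weighting is an arbitrary choice."""
    return format_reward(completion) + accuracy_reward(completion, reference)


if __name__ == "__main__":
    good = "<think>2 + 2 = 4</think> <answer>4</answer>"
    wrong = "<think>A guess.</think> <answer>5</answer>"
    print(total_reward(good, "4"))
    print(total_reward(wrong, "4"))
    print(total_reward("The answer is 4", "4"))
```

Because both signals are computed by fixed rules rather than by a learned reward model, they are cheap to evaluate at scale. A real system would need a more forgiving answer comparison than exact string matching, for example for equivalent mathematical expressions.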

Open Source Sharing: The DeepSeek series models adhere to the open-source philosophy. The weights of DeepSeek-V3, DeepSeek-R1, and the smaller models distilled from R1 have all been open-sourced, and users are allowed to use R1 to train other models through distillation, promoting the exchange and innovation of AI technology.

Multi-Domain Advantages: DeepSeek-R1 exhibits strong capabilities across multiple domains; in coding, it ranks highly on platforms like Codeforces, surpassing most human competitors; in natural language processing tasks, it performs excellently in various text understanding and generation tasks.

High Cost-Performance Ratio: The API pricing for the DeepSeek series models is user-friendly. For instance, the input and output prices for the DeepSeek-V3 API are significantly lower than those of similar models; the DeepSeek-R1 API service is also competitively priced, reducing costs for developers.

Applicable Scenarios for DeepSeek-R1

Natural Language Processing Tasks: Including text generation, question-answering systems, machine translation, and text summarization. For example, in a question-answering system, DeepSeek-R1 can understand questions and use reasoning abilities to provide accurate answers; in text generation tasks, it can generate high-quality text based on given themes.

Code Development: Assists developers in writing code, debugging programs, and understanding code logic. For instance, when developers encounter coding issues, DeepSeek-R1 can analyze the code and provide solutions; it can also generate code frameworks or specific code snippets based on functional descriptions.

Mathematical Problem Solving: In educational and research contexts, it solves complex mathematical problems. DeepSeek-R1 performs exceptionally well on AIME competition-related questions, aiding students in learning mathematics and researchers in tackling mathematical challenges.

Model Research and Development: Provides references and tools for AI researchers for model distillation, improving model structures, and training methods. Researchers can experiment with DeepSeek's open-source models to explore new technological directions.

Decision Support: In business and finance, it processes data and information to provide decision-making advice. For example, analyzing market data to inform marketing strategies for companies; processing financial data to assist in investment decisions.

Concise User Guide for the DeepSeek Series Models

Access the Platform: Users can log in to the DeepSeek official website (https://www.deepseek.com/) to enter the platform.

Select a Model: On the official website or in the app, conversations are powered by DeepSeek-V3 by default; turning on "Deep Thinking" mode activates the DeepSeek-R1 model. If calling via the API, set the corresponding model parameter in the code as needed, for example model='deepseek-reasoner' to use DeepSeek-R1.

Input Task: Enter a natural language description of the task in the conversation interface, such as "write a love story," "explain the function of this code," or "solve this math equation"; when using the API, construct requests according to API specifications, passing task-related information as input parameters.

Obtain Results: After processing the task, the model returns results, allowing users to view the generated text and answers in the interface; when using the API, parse the result data from the API response for further processing.
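For API users, the three steps above (select a model, input a task, obtain results) can be sketched in a few lines of Python using only the standard library. Treat this as a sketch under assumptions rather than official usage: only the model name 'deepseek-reasoner' comes from the text above. The endpoint URL, the 'deepseek-chat' name for DeepSeek-V3, the OpenAI-style request and response layout, and the helper names `build_request`, `extract_answer`, and `ask` are assumptions here, so check the current DeepSeek API documentation before relying on them.

```python
import json
import urllib.request

# Assumption: an OpenAI-style chat completions endpoint.
API_URL = "https://api.deepseek.com/chat/completions"


def build_request(task: str, use_reasoner: bool = True) -> dict:
    """Select a model and wrap the task description as a chat message."""
    # 'deepseek-reasoner' selects DeepSeek-R1 (from the guide above);
    # 'deepseek-chat' for DeepSeek-V3 is an assumption.
    model = "deepseek-reasoner" if use_reasoner else "deepseek-chat"
    return {"model": model, "messages": [{"role": "user", "content": task}]}


def extract_answer(response: dict) -> str:
    """Pull the generated text out of an OpenAI-style response body."""
    return response["choices"][0]["message"]["content"]


def ask(task: str, api_key: str) -> str:
    """Send the task to the API and return the model's answer."""
    body = json.dumps(build_request(task)).encode("utf-8")
    request = urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=120) as resp:
        return extract_answer(json.load(resp))
```

With a valid key, a call such as `ask("solve this math equation: 2x + 3 = 11", api_key)` would return the answer text. Keeping request construction and response parsing in separate functions makes both easy to test without network access.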

Conclusion

The DeepSeek series models have achieved significant results in the AI field due to their outstanding performance, innovative training methods, spirit of open-source sharing, and high cost-performance advantages.

If you are interested in AI technology, feel free to like, comment, and share your thoughts on the DeepSeek series models. Also, stay tuned for DeepSeek's future developments, as we look forward to more surprises and breakthroughs in the AI field, driving continuous progress in AI technology and bringing more transformation and opportunities to various industries.