With 14 years of industry experience, we see the coming wave and are moving to meet it. This article explores technology and application trends in the new AI era from an enterprise perspective: staying true to our original mission internally while looking outward for insights that help us improve.

Technological Watershed: The Efficiency Game of Computing Power, Algorithms, and Data

As large models iterate rapidly, humanity has moved onto a fast track toward Artificial Superintelligence (ASI). Mature big data technology supplies AI with production inputs at massive scale, advances in GPUs have unlocked AI's productivity, and algorithmic innovations have eased the bottlenecks of computing power and data. As large language models such as DeepSeek, OpenAI o1, and Grok keep improving through better algorithms, more parameters, and more compute, AI development has reached a crossroads: an efficiency game among computing power, algorithms, and data.

If Moore's Law is any guide, computing power will be the first of the three to hit a ceiling, in the form of energy efficiency. The era in which foundation models advance mainly by stacking compute will eventually end. This is not an argument against compute: more parameters and more compute still tend to produce better models, but diminishing marginal returns will cause compute investment to level off. The product roadmaps of today's AI giants already point this way: with the launch of xAI's Grok, the gains from stacking compute have shown signs of fatigue, while other giants such as OpenAI have begun to explore application areas such as AI Agents and to launch agent products.
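The diminishing-returns argument can be made concrete with a toy calculation. The sketch below assumes, purely for illustration, that model loss falls as a power law of compute; the function names and the constants `a` and `b` are hypothetical and are not fitted to any real model.

```python
# Illustrative only: a hypothetical power-law relation between compute and loss.
# The constants are invented for demonstration, not measured values.

def loss(compute: float, a: float = 10.0, b: float = 0.1) -> float:
    """Assumed loss curve: loss = a * compute^(-b)."""
    return a * compute ** (-b)


def gain_from_doubling(compute: float) -> float:
    """Absolute loss reduction obtained by doubling the compute budget."""
    return loss(compute) - loss(2 * compute)


if __name__ == "__main__":
    for c in (1e3, 1e6, 1e9):
        print(f"compute={c:.0e}  loss={loss(c):.3f}  "
              f"gain from doubling={gain_from_doubling(c):.4f}")
```

Under this assumed curve every doubling of compute still lowers the loss, but each doubling buys less than the one before it, which is the sense in which compute investment is expected to level off.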

Synthetic data and private data are the next breakthrough points for data in the large model era. We live in an age of exploding information, yet precisely because big data technology and algorithmic breakthroughs have made data processing so efficient, AI development is already running short of data. Not long after ChatGPT launched, Sam Altman remarked that the era of ever-larger models was coming to an end. The high-quality corpora accumulated over the history of the internet have largely been consumed by GPT-3 and GPT-4. Parameter counts can keep growing, but matching high-quality data is increasingly scarce, so the marginal benefit of adding parameters will gradually decline as well.

Algorithms act more like a "catalyst" for AI development. They can break through the constraints of computing power and data and produce non-linear progress. Algorithmic breakthroughs often translate directly into breakthroughs in LLMs: ChatGPT was built on the Transformer, and DeepSeek on Mixture of Experts (MoE). As model complexity increases, however, the room for algorithmic improvement narrows. It is widely believed that further breakthroughs may require drawing on interdisciplinary research, much as neuroscience inspired deep learning and cognitive science inspired attention mechanisms. How many more "Transformer moments" lie ahead is ultimately unpredictable.

Tongfu Shield Declaration: The development of foundation models is stabilizing amid the efficiency game of computing power, algorithms, and data, and these models are becoming solid infrastructure on the path to ASI; technical resources are gradually shifting toward extracting data value in specialized fields and deploying AI agents in real-world scenarios; "real-world application" will be the main theme of the next stage of AI development.

Application Explosion: Multi-Agent Collaboration Ushers in the Agent Era

The development of AI Agents is an evolution from "question-answering robots" to "intelligent assistants." The core of an Agent is task execution: the AI is no longer limited to giving suggestions but carries out specific tasks, such as placing an online order or executing a transaction. Moving from simple tasks to complex ones usually requires different models and different agents to work together. We call this kind of multi-agent collaboration InterAgent (IA). It is both an innovation in technical architecture and a reconstruction of how AI is applied in industry. We believe IA will drive AI to leap from single intelligence to group collaboration and from tool assistance to autonomous execution, becoming the core driving force behind the full-scale explosion of the Agent era.
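As a rough illustration of multi-agent collaboration, the sketch below shows a coordinator that hands each step of a task to a specialized agent and passes the previous result along. All names here (`Agent`, `Coordinator`, `build_demo`) are hypothetical and invented for this example; the agents are stand-in functions rather than real models, and this is not a description of any particular product's architecture.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Agent:
    """A hypothetical agent: a name, one skill, and a function that performs it."""
    name: str
    skill: str
    handle: Callable[[str], str]


class Coordinator:
    """Routes each step of a plan to the agent registered for that skill."""

    def __init__(self) -> None:
        self._agents: Dict[str, Agent] = {}

    def register(self, agent: Agent) -> None:
        self._agents[agent.skill] = agent

    def run(self, plan: List[Tuple[str, str]]) -> List[str]:
        results: List[str] = []
        previous = ""
        for skill, instruction in plan:
            agent = self._agents[skill]  # raises KeyError if no agent has the skill
            # Pass the previous agent's output along as context for the next step.
            task = f"{instruction} | prior: {previous}" if previous else instruction
            previous = agent.handle(task)
            results.append(f"{agent.name}: {previous}")
        return results


def build_demo() -> Coordinator:
    """Two stand-in agents: one 'searches', one 'places an order'."""
    coordinator = Coordinator()
    coordinator.register(Agent("Searcher", "search", lambda task: f"found item for '{task}'"))
    coordinator.register(Agent("Buyer", "order", lambda task: f"order placed ({task})"))
    return coordinator


if __name__ == "__main__":
    plan = [("search", "cheapest USB cable"), ("order", "buy the result")]
    for line in build_demo().run(plan):
        print(line)
```

The point of the design is that no single agent needs to do everything: each one exposes a narrow skill, and the complex task emerges from how the coordinator chains them.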

At the technical level, Anthropic's Model Context Protocol (MCP) connects different data sources, models, and tools, providing a standardized protocol for multi-agent collaboration (IA). MCP defines how applications and models exchange contextual information, which simplifies Agent development and makes multi-Agent collaboration more consistent and efficient. The MCP ecosystem is still at an early stage. As a provider of AI Agent trust services, Tongfu Shield is actively taking part in building it: deploying MCP servers, developing MCP plugins for the community, and contributing to the expansion of the multi-agent collaboration ecosystem.

Figure 1: Tongfu Shield MCP AI Plugin Service
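To give a feel for what "exchanging contextual information" looks like on the wire, the sketch below builds and answers a tool-invocation request in the JSON-RPC 2.0 style that MCP is based on. The message shapes reflect our understanding of the public MCP specification and should be checked against it; the helper functions and the `risk_score` tool are hypothetical, and no real MCP SDK is used.

```python
import json
from typing import Any, Callable, Dict


def make_tool_call(request_id: int, tool_name: str, arguments: Dict[str, Any]) -> str:
    """Build a JSON-RPC 2.0 request asking a server to invoke a named tool."""
    request = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


def handle_request(raw: str, tools: Dict[str, Callable[..., str]]) -> str:
    """Toy server-side dispatcher (an assumption, not a real MCP server)."""
    request = json.loads(raw)
    params = request["params"]
    tool = tools.get(params["name"])
    if tool is None:
        # -32602 is the JSON-RPC 2.0 "Invalid params" error code.
        response = {
            "jsonrpc": "2.0",
            "id": request["id"],
            "error": {"code": -32602, "message": f"Unknown tool: {params['name']}"},
        }
    else:
        text = tool(**params["arguments"])
        response = {
            "jsonrpc": "2.0",
            "id": request["id"],
            "result": {"content": [{"type": "text", "text": text}]},
        }
    return json.dumps(response)


if __name__ == "__main__":
    # Hypothetical tool used only for this demonstration.
    tools = {"risk_score": lambda account: f"{account}: low"}
    raw = make_tool_call(1, "risk_score", {"account": "acct-1"})
    print(raw)
    print(handle_request(raw, tools))
```

Because every tool is reached through the same request and response envelope, an agent that can speak this format can, in principle, use any compliant server without custom integration code.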

At the application level, as Agent frameworks such as Dify and elizaOS mature, AI Agents are becoming increasingly capable "intelligent assistants." The emergence of Manus has sparked discussion about "general-purpose agents." On the one hand, Manus's demonstrations show that the logical reasoning of large models can be turned into real productivity, with huge commercial potential. On the other hand, because it has not opened any public testing channel, the authenticity of its technical innovation, its marketing strategy, and its actual ability to create value are all highly controversial. Its headline concept of a "general-purpose Agent" in particular still faces considerable limitations given the current state of AI technology.

In contrast to Manus's grand general-purpose narrative, Agent application platforms such as Dify already have practical applications in many fields, thanks to community co-construction. A workflow dedicated to a specific application scenario has more vitality than a general-purpose large model, and that vitality comes from the essence of business: value creation. Imagine a company building an AI Agent for customer outreach and sales. To maximize profit, it will train the Agent on its highest-quality data and its best expert experience. The information barriers created by private data and industry know-how will make it far more effective than a general-purpose Agent. Now imagine an AI Agent marketplace that brings together excellent Agents from many fields because it gives Agent creators sufficient incentives. Agents compete in the market, and only those that create more value survive. Excellent Agents attract more users, and more users supply more data that further improves the Agents, forming a positive cycle.

Figure 2: Tongfu Shield On-Chain AI Plugin Platform (left), AI Agent Plugin Market (right)

Tongfu Shield Declaration: In the AI application era, agents (Agents) are the core of applications and multi-agent collaboration (InterAgent, or IA) is the core of the technology; helping to build agent infrastructure will yield huge commercial returns, and the keywords are "vertical fields," "community incentives," and "open platforms."

The Future of Models: Small Models Lead the New Era "Turing Test"

DeepMind co-founder Mustafa Suleyman proposes a new-era "Turing Test" for AI in his book The Coming Wave: give an AI $100,000 and see whether it can learn to trade on Amazon and eventually turn it into $1 million. This is a very interesting idea. What matters to users is less the technical baseline than the AI Agent's ability to act, that is, its ability to create value. Commercial success is the new "Turing Test," and it is a test designed specifically for Agents. Technological development is often driven by business models, and we believe model technology will likewise move from foundation models toward specialized small models that perform better and earn more in expert fields.

From a technical perspective, the framework for small models is already mature. Contrary to common belief, the technical origins of small models predate large language models and trace back to the expert systems of the 1960s, whose core idea was to simulate the decision-making of human experts through knowledge bases and reasoning mechanisms. The Mixture of Experts (MoE) framework, first proposed in the early 1990s and revived for deep learning in the 2010s (and a direct inspiration for DeepSeek's algorithmic innovations), is likewise a basic framework for expert models. It uses a dynamic routing mechanism to send each input to a subset of sub-models (experts), reducing the computational burden while maintaining performance and laying the foundation for the modular design of small models. The maturity of large models also creates the conditions for better small models: through techniques such as knowledge distillation and model pruning, a small model can be far smaller than its source model while retaining most of its performance.
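The dynamic routing idea can be sketched in a few lines. The example below is a generic top-k gating scheme written in plain Python for readability; it is a minimal illustration under simplifying assumptions (scalar expert outputs, a linear gate, hand-picked weights) and is not the routing used by DeepSeek or any other specific model. The names `route_top_k` and `gate_weights` are hypothetical.

```python
import math
from typing import Callable, List, Sequence, Tuple


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a short list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(
    x: Sequence[float],
    gate_weights: Sequence[Sequence[float]],
    experts: Sequence[Callable[[Sequence[float]], float]],
    k: int = 2,
) -> Tuple[float, List[int]]:
    """Send input x to the k highest-scoring experts and mix their outputs."""
    # Gate score per expert: dot product of the input with that expert's gate vector.
    scores = [sum(xi * wi for xi, wi in zip(x, w)) for w in gate_weights]
    # Keep only the k best experts; the others are never evaluated.
    chosen = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    # Renormalize the gate scores over the chosen experts only.
    weights = softmax([scores[i] for i in chosen])
    output = sum(w * experts[i](x) for w, i in zip(weights, chosen))
    return output, chosen


if __name__ == "__main__":
    # Four toy "experts"; each just returns a constant so the mixing is easy to follow.
    experts = [lambda x, v=float(i): v for i in range(4)]
    gate_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0], [0.5, 0.5]]
    output, chosen = route_top_k([1.0, 2.0], gate_weights, experts, k=2)
    print("experts used:", chosen)
    print("mixed output:", round(output, 4))
```

Only `k` of the experts run for any given input, which is where the compute saving comes from: total parameter count can grow with the number of experts while the cost per input stays roughly tied to `k`.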

From a business model perspective, the commercial conditions for small models are already in place. Small models are highly cost-efficient: their deployment and inference costs are only a fraction of those of large models, yet when combined with expert knowledge bases they can outperform large models by a wide margin within their own domain. Data silos, in fact, give data higher commercial value and stronger competitive barriers. As the commercial use of small models matures, high-value data can truly become a factor of production, opening new business models and profit margins for enterprises.

It is worth noting that combining distributed digital identity with small model technology can create high-value business models in the digital space. Small models let private data in each field realize its full commercial value, and the model's digital identity becomes the key to authorizing the use of that data. Distributed digital identity technology is already relatively mature. How to give each small model and each AI Agent a trustworthy identity, or even an account system, in the digital space is a key question in exploring new applications for AI Agents.

In some fields, small models have advantages that are hard to match. In data-sensitive industries such as energy, defense, and healthcare, data must be processed locally and sometimes requires inference at the edge, which very large models are poorly suited to. Take power grid operations as an example: AI Agents backed by domain-expert small models can make risk control interventions more intelligent and more user-friendly in business risk control scenarios; automate lead collection, campaign operations, and precision customer acquisition in marketing scenarios; and significantly improve dispatching efficiency and reduce operating costs in distributed photovoltaic and edge device management scenarios. In industries such as financial risk control, law, and education and training, expert experience is valuable and private, and local knowledge bases combined with custom workflows can effectively protect that content from being reverse-engineered by users.

Figure 3: Tongfu Shield Power Grid Business Security Multi-AI Agent Collaboration Matrix

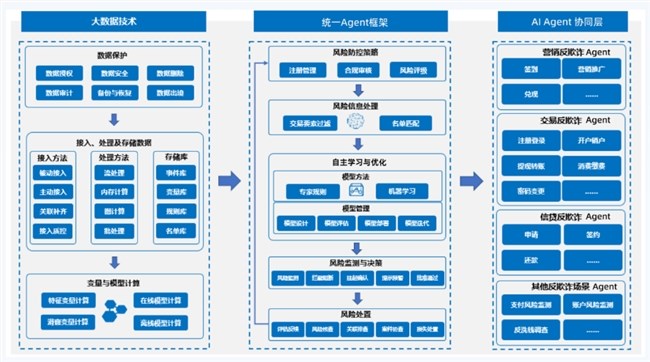

Figure 4: Tongfu Shield Banking AI Agent Intelligent Risk Control Platform

Tongfu Shield Declaration: Commercial success is the new-era "Turing Test," and small models are the best path for AI Agents to pass it. Distributed business and distributed intelligence will also flourish as small models develop.