Meta and researchers from the University of Waterloo have developed MoCha, an AI system that generates full-body character animations with synchronized speech and natural movements. Unlike previous models that focused solely on facial animation, MoCha renders full-body motion from multiple camera angles, covering lip-sync, gestures, and interactions between multiple characters.

Improving Lip-Sync Accuracy

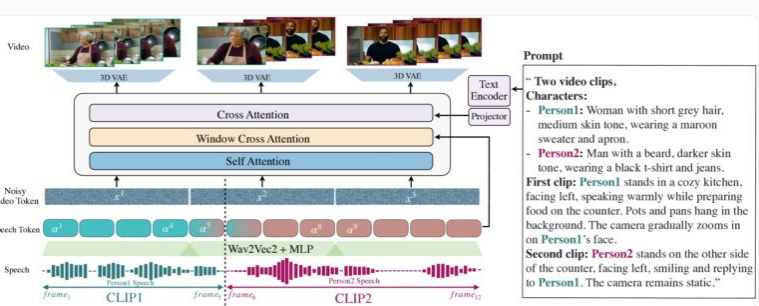

MoCha's demonstration shows upper body movements and gestures generated in sync with speech, in both close-up and medium shots. Its "audio-visual window attention" mechanism addresses two long-standing challenges in AI video generation: keeping the audio at full resolution while the video is compressed, and avoiding lip-sync mismatches when video frames are generated in parallel.

MoCha restricts each frame's access to a specific window of audio data, mirroring how human speech works: lip movements are closely tied to immediate sounds, while body language reflects broader textual patterns. By adding markers before and after each frame's audio, MoCha achieves smoother transitions and more precise lip synchronization.
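The windowing idea described above can be illustrated with a small masked cross-attention sketch. This is not MoCha's implementation: the function names, the fixed number of audio tokens per frame (`audio_per_frame`), and the reading of the "markers before and after" as a few extra audio tokens of context on each side (`pad`) are all assumptions made for illustration.

```python
import numpy as np

def audio_window_mask(num_frames: int, audio_per_frame: int, pad: int) -> np.ndarray:
    """Boolean mask of shape (num_frames, num_audio); True means the frame may attend.

    Hypothetical layout: frame i owns audio tokens
    [i * audio_per_frame, (i + 1) * audio_per_frame), widened by `pad` tokens
    on each side and clipped at the ends of the audio sequence.
    """
    num_audio = num_frames * audio_per_frame
    audio_idx = np.arange(num_audio)
    frame_idx = np.arange(num_frames)
    starts = frame_idx * audio_per_frame - pad
    ends = (frame_idx + 1) * audio_per_frame + pad
    return (audio_idx[None, :] >= starts[:, None]) & (audio_idx[None, :] < ends[:, None])

def windowed_cross_attention(q, k, v, mask):
    """Scaled dot-product cross-attention where masked-out audio tokens get zero weight."""
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores = np.where(mask, scores, -np.inf)       # block audio outside the window
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights
```

With `audio_window_mask(num_frames=4, audio_per_frame=4, pad=1)`, each frame sees only its own four audio tokens plus one neighbor on each available side, so the attention weights for every other audio token are exactly zero.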

MoCha generates realistic videos with facial expressions, gestures, and lip movements based on text descriptions.

To build the system, the research team used 300 hours of carefully curated video content and combined it with text-based video sequences to expand the possibilities of expression and interaction. MoCha excels particularly in multi-character scenes; users define characters once and easily recall them across different scenes using labels (e.g., "Character 1" or "Character 2") without repeated descriptions.

Managing Multiple Characters

In tests across 150 different scenarios, MoCha outperformed comparable systems in both lip-sync accuracy and the naturalness of its movements. Independent evaluators consistently rated the generated videos as highly realistic.

Researchers developed a prompt template allowing users to reference specific characters without repeated descriptions.
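The article does not give the template's actual format, so the following is only a rough sketch of the "define once, reference by label" idea. The `build_prompt` helper, the `Characters:` and `Clip N:` layout, and the sample descriptions are hypothetical; only the "Character 1" / "Character 2" label style comes from the text.

```python
import re

# Labels in the style mentioned in the article, e.g. "Character 1".
LABEL = re.compile(r"Character \d+")

def build_prompt(characters: dict[str, str], clips: list[str]) -> str:
    """Assemble a prompt that describes each character once, then refers to labels only.

    The output layout is a made-up illustration, not MoCha's real template.
    """
    lines = ["Characters:"]
    lines += [f"  {label}: {desc}" for label, desc in characters.items()]
    for n, clip in enumerate(clips, start=1):
        # Reject clips that mention a label with no definition.
        missing = set(LABEL.findall(clip)) - set(characters)
        if missing:
            raise ValueError(f"clip {n} references undefined labels: {sorted(missing)}")
        lines.append(f"Clip {n}: {clip}")
    return "\n".join(lines)

prompt = build_prompt(
    {
        "Character 1": "a woman in a red coat",
        "Character 2": "a tall man with glasses",
    },
    [
        "Character 1 greets Character 2 at the door.",
        "Character 2 laughs and sits down.",
    ],
)
```

Each description appears once in the header, and every later clip uses only the short label, which is the property the researchers' template is said to provide.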

MoCha shows potential across a range of applications, particularly digital assistants, virtual avatars, advertising, and educational content. Meta has not revealed whether the system will be open-sourced or remain a research prototype.

MoCha's release is particularly noteworthy in the increasingly competitive landscape of AI video technology. Meta recently launched the MovieGen system, while ByteDance, the parent company of TikTok, is developing its own AI animation tools, including INFP, OmniHuman-1, and Goku, highlighting the active involvement of social media companies in this field.