Researchers from Meta and the University of Waterloo recently unveiled MoCha, an AI system that generates full-body animated characters with synchronized speech and natural movement from text descriptions. The technology could make content creation both faster and more expressive, with potential uses across many fields.

Revolutionizing Animation: Full-Body Animation with Precise Lip Sync

Unlike previous AI models that focused primarily on facial expressions, MoCha can render natural full-body movement. Whether a scene is framed as a close-up or a medium shot, the system generates nuanced actions from the text input, including lip synchronization, gestures, and interactions between multiple characters. Early demonstrations, which concentrated mainly on the upper body, showed the system precisely matching characters' lip movements to the dialogue while their body language aligned naturally with the meaning of the text.

To improve lip synchronization, the research team introduced a "speech-video window attention" mechanism. It targets two long-standing problems in AI video generation: the mismatch that arises because video is compressed during processing while the audio keeps its full resolution, and the lip-sync drift that commonly appears when all video frames are generated in parallel. The core idea is to restrict each video frame's access to the audio within a specific window around it. This mirrors how human speech works: mouth movements depend on the immediate sound, while body language follows the broader meaning of the text. By extending each window with a few audio tokens just before and after it, MoCha produces smoother transitions and more accurate lip sync.
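To make the windowing idea concrete, here is a minimal NumPy sketch of windowed cross-attention from video frames to audio tokens. It is not Meta's implementation: it assumes audio tokens are spread evenly across the compressed video frames, and the function names and the `pad` parameter (how many extra audio tokens each window includes on either side) are hypothetical. Each frame's query may only attend to audio keys inside its window; everything outside the window receives zero attention weight.

```python
import numpy as np


def speech_video_window_mask(num_video_frames, num_audio_tokens, pad=1):
    """Boolean mask: mask[i, j] is True if video frame i may attend to audio token j.

    Hypothetical sketch: assumes audio tokens are evenly distributed over the
    video frames, so each frame "owns" roughly `ratio` consecutive audio tokens.
    `pad` widens every window by that many neighboring tokens on each side.
    """
    ratio = num_audio_tokens / num_video_frames
    mask = np.zeros((num_video_frames, num_audio_tokens), dtype=bool)
    for i in range(num_video_frames):
        start = int(np.floor(i * ratio)) - pad      # first audio token in the window
        end = int(np.ceil((i + 1) * ratio)) + pad   # one past the last token
        mask[i, max(start, 0):min(end, num_audio_tokens)] = True
    return mask


def windowed_cross_attention(video_q, audio_k, audio_v, mask):
    """Scaled dot-product attention from video queries to audio keys/values.

    Scores outside each frame's window are set to -inf, so after the softmax
    those audio tokens get exactly zero weight.
    """
    d = video_q.shape[-1]
    scores = video_q @ audio_k.T / np.sqrt(d)
    scores = np.where(mask, scores, -np.inf)
    scores -= scores.max(axis=-1, keepdims=True)    # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ audio_v


if __name__ == "__main__":
    # 4 compressed video frames, 8 audio tokens (two per frame), one token of padding.
    mask = speech_video_window_mask(4, 8, pad=1)
    print(mask.astype(int))

    rng = np.random.default_rng(0)
    q = rng.standard_normal((4, 16))   # one query vector per video frame
    k = rng.standard_normal((8, 16))   # one key vector per audio token
    v = rng.standard_normal((8, 16))   # one value vector per audio token
    print(windowed_cross_attention(q, k, v, mask).shape)
```

With 4 frames, 8 audio tokens, and `pad=1`, the first frame sees audio tokens 0 to 2 and the second sees tokens 1 to 4, so adjacent windows overlap by two tokens. That overlap is one simple way to obtain the smoother frame-to-frame transitions described above.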

Effortless Multi-Character Management with a Streamlined Prompt System

For scenes with multiple characters, the MoCha team developed a simple, efficient prompt system. Users define each character once and then refer to them in different scenes with short tags such as 'Person1' and 'Person2'. This removes the need to describe the same characters over and over, making multi-character animation much easier to create.
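The article does not spell out MoCha's exact prompt syntax, so the following Python sketch only illustrates the define-once, reference-by-tag pattern. The `build_prompt` function, the `Clip N:` line format, and the `PersonN` tag check are hypothetical choices: characters are described once in a header, each scene refers to them only by tag, and any tag without a matching definition is rejected.

```python
import re

# Hypothetical tag format: "Person" followed by a number, e.g. Person1, Person2.
TAG_PATTERN = re.compile(r"\bPerson\d+\b")


def build_prompt(characters: dict[str, str], scenes: list[str]) -> str:
    """Assemble a multi-character prompt (illustrative format, not MoCha's).

    Each character is described exactly once in the header; scene lines refer
    to characters by tag only. Raises ValueError if a scene uses an undefined tag.
    """
    for scene in scenes:
        for tag in TAG_PATTERN.findall(scene):
            if tag not in characters:
                raise ValueError(f"Scene references undefined character tag: {tag}")
    header = [f"{tag}: {description}" for tag, description in characters.items()]
    body = [f"Clip {i}: {scene}" for i, scene in enumerate(scenes, start=1)]
    return "\n".join(header + body)


if __name__ == "__main__":
    characters = {
        "Person1": "a woman with short red hair in a denim jacket",
        "Person2": "an older man with a gray beard and glasses",
    }
    scenes = [
        "Medium shot: Person1 talks to Person2 at a kitchen table.",
        "Close-up: Person2 nods and replies.",
    ]
    print(build_prompt(characters, scenes))
```

Keeping the descriptions in one dictionary means a character's appearance is edited in a single place, and every scene that mentions the tag picks up the change.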

Outperforming Competing Systems

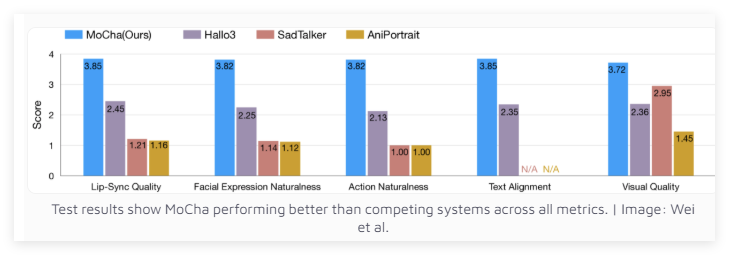

Tested across 150 diverse scenarios, MoCha outperformed similar systems in both lip-sync accuracy and the naturalness of its movements, leading across the reported metrics. Independent evaluators also rated the realism of MoCha's generated videos highly.

Meta's research team believes MoCha has significant potential in areas such as digital assistants, virtual avatars, advertising, and educational content. However, Meta has not said whether the system will be open-sourced or remain a research prototype. MoCha also arrives as major social media companies race to develop AI-driven video technology.

Meta previously unveiled Movie Gen, while ByteDance, TikTok's parent company, is actively developing its own AI animation systems, including INFP, OmniHuman-1, and Goku. This competition is likely to speed up the development and adoption of AI video technology.

Project Link: https://top.aibase.com/tool/mocha