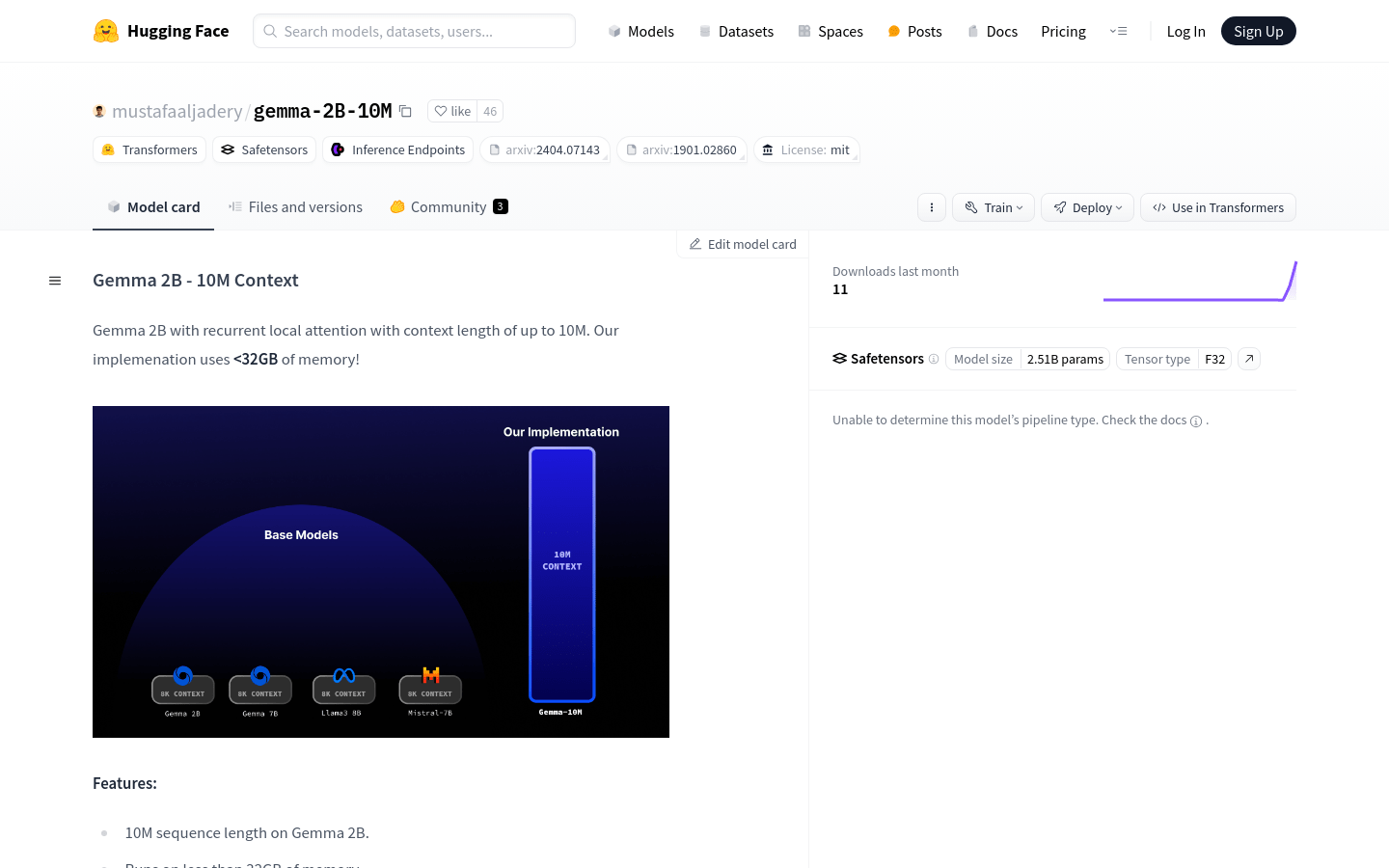

Gemma-2B-10M

Gemma-2B-10M is a version of the Gemma 2B model that supports sequence lengths of up to 10M tokens while keeping memory usage low, which makes it suitable for large-scale language model applications.

Gemma-2B-10M Visits Over Time

Monthly Visits: 27,175,375

Bounce Rate: 44.30%

Pages per Visit: 5.8

Visit Duration: 00:04:57