Welcome to the 【AI Daily】 column, your daily guide to the world of artificial intelligence. Each day we round up the biggest AI news with a focus on developers, helping you follow technology trends and new AI product applications.

Discover new AI products. Learn more: https://top.aibase.com/

1. Alibaba Launches AI Flagship App "New Quark," Fully Upgraded to "AI Super Box"

On March 13th, Alibaba launched New Quark, its newly upgraded AI flagship application. Built on Alibaba's Tongyi reasoning and multimodal large models, the app combines multiple AI functions into a single experience. New Quark can hold intelligent conversations and also has deep thinking and task execution capabilities, covering user needs across many scenarios. With this launch, Alibaba strengthens its position in AI applications and lays groundwork for future development.

【AiBase Summary:】

🤖 New Quark integrates multiple functions, including AI dialogue, deep thinking, and in-depth search, providing one-stop service.

📊 Through an intelligent central system, New Quark automatically identifies user instructions and executes them in depth.

🌐 Alibaba plans to quickly integrate the latest achievements of the Tongyi series of models into New Quark to enhance its functionality.

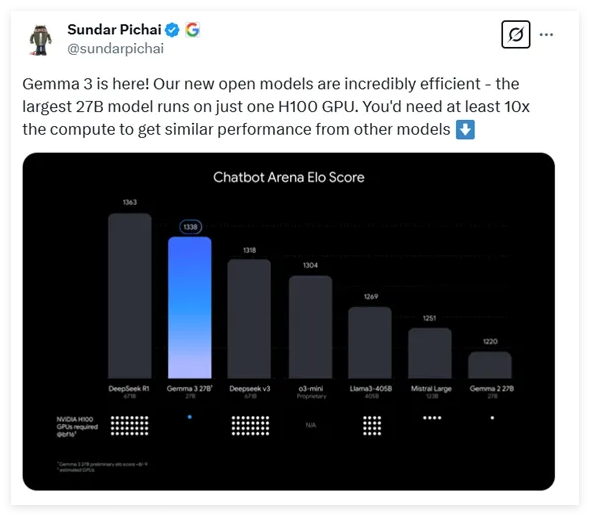

2. Google Open-Sources Next-Generation Multimodal Model Gemma-3: Superior Performance, 10x Lower Cost

Google's newly released multimodal large model, Gemma-3, has attracted widespread attention for its low cost and high performance. The model comes in several sizes, up to 27 billion parameters, and needs only a single H100 GPU for efficient inference, significantly reducing compute requirements. Gemma-3 ranks well in chat model comparisons and supports long-context processing and multimodal input, reflecting strong language capabilities and an innovative architecture. It is among the highest-performing models currently available for its level of compute requirements.

【AiBase Summary:】

🔍 Gemma-3 is Google's latest open-source multimodal large model, with sizes ranging from 1 billion to 27 billion parameters and a claimed tenfold reduction in compute requirements.

💡 The model uses innovative architectural design to effectively handle long contexts and multimodal data, supporting simultaneous processing of text and images.

🌐 Gemma-3 supports 140 languages and, after training optimization, excels in various tasks, showcasing its powerful comprehensive capabilities.

Details: https://huggingface.co/collections/google/gemma-3-release-67c6c6f89c4f76621268bb6d
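The single-GPU claim above can be sanity-checked with simple arithmetic. The sketch below estimates the memory needed for the weights of a 27-billion-parameter model at several numeric precisions and compares it with one GPU's memory. It assumes an 80 GB H100 and counts weights only (no activations or KV cache); neither assumption comes from the article, and the precisions listed are illustrative rather than the formats Google ships.

```python
# Back-of-the-envelope check: do the weights of a 27B-parameter model fit on one GPU?
# Assumptions (not from the article): an 80 GB H100, weights-only memory,
# and the illustrative precisions listed below.

BYTES_PER_PARAM = {"float32": 4, "bfloat16": 2, "int8": 1, "int4": 0.5}
H100_MEMORY_GB = 80  # assumed 80 GB variant


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Memory for the model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits_on_gpu(num_params: float, dtype: str,
                gpu_memory_gb: float = H100_MEMORY_GB) -> bool:
    """True if the weights alone are smaller than the GPU's memory."""
    return weight_memory_gb(num_params, dtype) < gpu_memory_gb


if __name__ == "__main__":
    for dtype in BYTES_PER_PARAM:
        gb = weight_memory_gb(27e9, dtype)
        print(f"{dtype:>8}: {gb:6.1f} GB  fits: {fits_on_gpu(27e9, dtype)}")
```

Under these assumptions, 16-bit weights take about 54 GB and fit on one 80 GB card, while 32-bit weights (about 108 GB) do not. Real deployments also need headroom for activations and the KV cache, so this is a lower bound.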

3. Baidu's Wenxin Quick Code Releases Comate Zulu Version and Officially Opens Public Beta Testing

Baidu's Wenxin Quick Code has released the Comate Zulu version, a notable step forward in intelligent programming. By combining the Wenxin large model with large-scale programming data, this version gives developers a more efficient programming experience. Users can interact with the system in natural language to quickly build projects and understand code logic, significantly improving development efficiency. The public beta runs until March 28th, and developers can try the new feature in mainstream IDEs.

【AiBase Summary:】

🛠️ Describe requirements in natural language and have projects built automatically without writing code; supports voice input and image display.

📊 Quickly understand the business logic of the code base; provides architecture diagram organization and intelligent inspiration, helping developers quickly get started with new projects.

⚙️ Automatically builds the development environment; supports automatic dependency installation and service auto-start; achieves end-to-end generation from requirements to code.

Details: https://comate.baidu.com

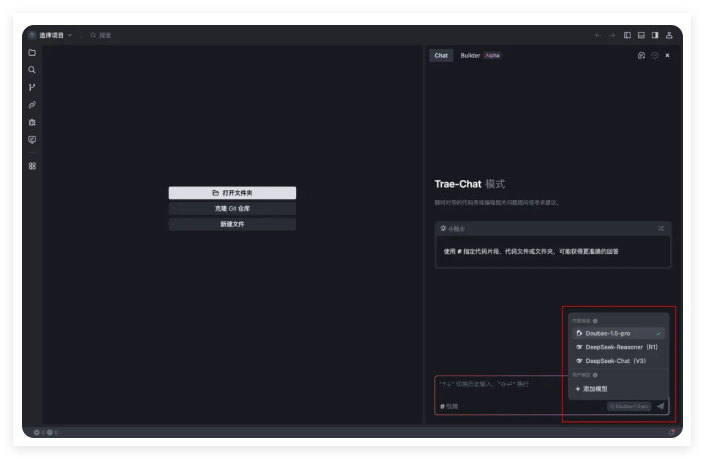

4. ByteDance's Trae Integrates with SiliconCloud, Supporting Multiple DeepSeek Model APIs

The SiliconCloud platform has officially integrated with ByteDance's AI IDE, Trae, enhancing the developer's programming experience. Users can easily access multiple coding models, including DeepSeek-R1 and V3, to meet diverse needs. The platform also provides free API services to help developers achieve more efficient development processes. In the future, SiliconCloud will continue to expand model types and collaborative applications, aiming to provide developers with more stable services.

【AiBase Summary:】

🔧 Trae integrates with SiliconCloud, providing multiple efficient coding models to enhance the programming experience.

🔑 Users can easily add models and obtain API keys.

🚀 SiliconCloud is committed to providing stable API services and will expand model types in the future.

5. Explosive Update! Google AI Studio Evolves Again: YouTube Video Understanding in Seconds, AI Art Maintains Character Consistency

The latest upgrade to Google AI Studio has caused a stir in the tech world. Users can now have the model analyze a video directly from its YouTube link, with no downloading or uploading. The Gemini 2.0 Flash Experimental model performs well at video parsing and also shows striking consistency in image generation. These features mark a significant shift in Google's AI tooling and may have a profound impact on AI tools that are little more than thin wrappers around model APIs.

【AiBase Summary:】

🎥 Google AI Studio now supports direct parsing of YouTube video links, allowing users to quickly understand video content.

🖼️ Gemini 2.0 Flash Experimental excels in image generation, maintaining character consistency across multiple images.

⚡ The update marks Google AI Studio's transition from basic models to application-level tools, impacting the existing AI tool ecosystem.

Details: https://ai.google.dev/gemini-api/docs/vision?lang=python&hl=zh-cn#youtube

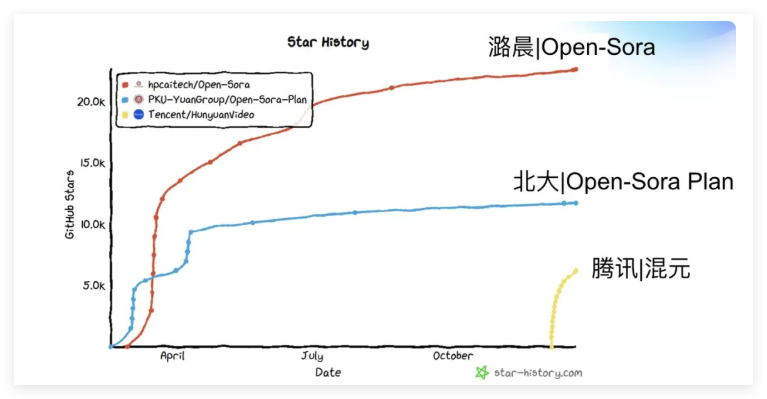
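For developers, the YouTube link feature amounts to passing the video URL to the model alongside a text prompt. The sketch below only builds a request body and sends nothing. The field names (`contents`, `parts`, `file_data`, `file_uri`) reflect one reading of the Gemini API documentation linked above and should be checked against it; the helper name `build_youtube_request` and the `VIDEO_ID` placeholder are hypothetical.

```python
import json

# Sketch only: builds a request body, does not call any API.
# Field names are an assumption based on the linked Gemini API docs;
# verify them there before relying on this shape.

YOUTUBE_PREFIXES = ("https://www.youtube.com/", "https://youtu.be/")


def build_youtube_request(video_url: str, prompt: str) -> dict:
    """Return a request body pairing a text prompt with a YouTube video URL."""
    if not video_url.startswith(YOUTUBE_PREFIXES):
        raise ValueError("expected a YouTube URL")
    return {
        "contents": [
            {
                "parts": [
                    {"text": prompt},
                    {"file_data": {"file_uri": video_url}},
                ]
            }
        ]
    }


if __name__ == "__main__":
    body = build_youtube_request(
        "https://www.youtube.com/watch?v=VIDEO_ID",  # placeholder, not a real video
        "Summarize this video in three sentences.",
    )
    print(json.dumps(body, indent=2))
```

Validating the URL before building the request keeps obviously wrong input from reaching the API. The actual call, model name, and authentication are left out on purpose, since the article does not specify them.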

6. Challenging Sora? Lu Cheng Technology Open-Sources Video Large Model Open-Sora 2.0, Reducing Costs and Increasing Speed

Lu Cheng Technology's Open-Sora 2.0, an 11-billion-parameter model trained for only $200,000, takes on industry benchmarks such as OpenAI Sora. It scores well in multiple evaluations; on VBench, its performance gap with OpenAI Sora narrows to 0.69%. Its open-source release and efficient training strategy lower the barrier to high-quality video generation and support the growth of the open-source ecosystem.

【AiBase Summary:】

💰 Low Cost: Open-Sora 2.0 requires only $200,000 in training costs, significantly lower than industry standards.

📈 Strong Performance: With 11 billion parameters, its performance is close to OpenAI Sora, performing excellently in VBench evaluations.

🌐 Open Source: The entire training code is open-source, promoting the common development of video generation technology.

Details: https://github.com/hpcaitech/Open-Sora

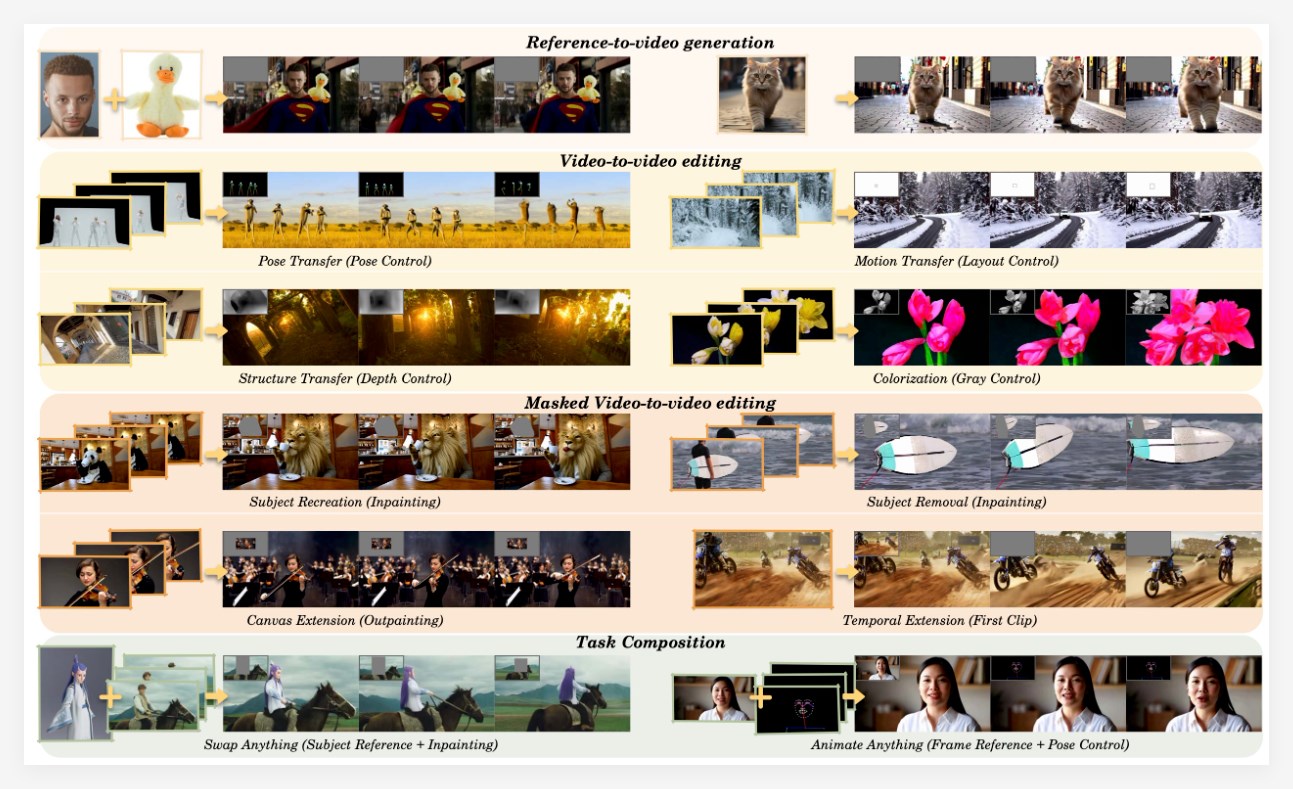

7. Alibaba Tongyi's New Video Generation and Editing Model VACE Enables Control of Motion Trajectories, Subject Replacement, etc.

Alibaba Tongyi Wan team has launched the new VACE model, aiming to lower the barrier to video production and improve creative efficiency. VACE's conditional video generation feature allows users to quickly realize their creativity through text descriptions, as if they had a dream film crew. In addition, VACE has several powerful editing functions, such as object motion trajectory control, video subject replacement, style transfer, and intelligent video frame expansion. Even old videos can be restored to their former glory through VACE's re-rendering technology, greatly enriching the possibilities of video creation.

【AiBase Summary:】

🎬 The VACE model quickly generates videos from text descriptions, improving creative efficiency.

🔄 Supports object motion trajectory control and video subject replacement, offering flexibility.

🖼️ Features intelligent video frame expansion and style transfer functions, enriching creative expression.

Details: https://arxiv.org/pdf/2503.07598

8. Li Auto's AI Assistant Ideal Student (Lixiang Tongxue) Launches Web Version, Integrating Full-Strength DeepSeek R1 and V3

Li Auto (rendered literally as "Ideal Auto") has officially launched the web version of its AI assistant, Ideal Student, further expanding its intelligent services. The assistant integrates the full-strength DeepSeek R1 and V3 (671B) models, providing strong question-answering capabilities and cross-scenario service coordination. Users can switch between models, and the assistant supports long-text input and image question answering, improving the interaction experience. Ideal Student's new image interaction function makes interaction more intuitive. Li Auto says it will continue to explore new service models to meet users' changing needs.

【AiBase Summary:】

💻 The Ideal Student web version is now online, letting users access it from their computers and expanding the intelligent service ecosystem.

🔍 Integrates the full-strength DeepSeek R1 and V3 (671B) models, supporting model switching and deep thinking to improve question answering.

🖼️ Supports thousand-character long-text input and image question answering, providing a stronger user interaction experience.

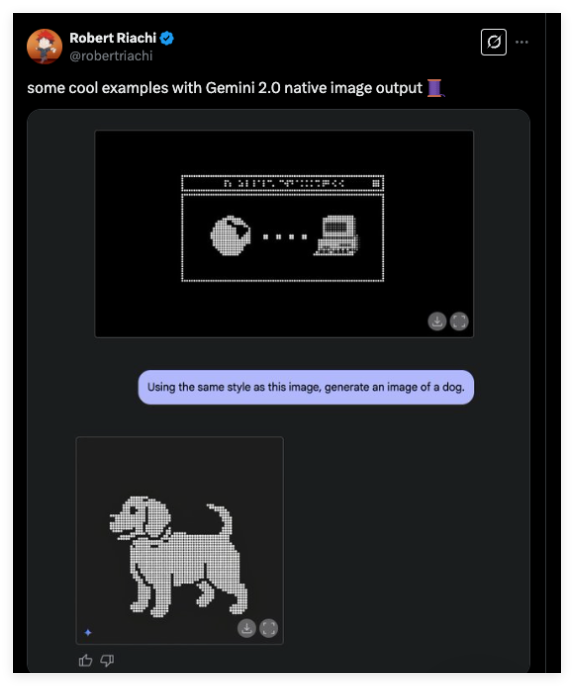

9. Google Gemini 2.0 Flash Releases Native Multimodal Image Generation Capabilities: Supports Multi-Round Conversational Real-Time Editing

Google's newly launched Gemini 2.0 Flash brings native image generation, improving generation efficiency and accuracy. Unlike earlier pipelines in which a large language model passes a text prompt to a separate image model, Gemini 2.0 Flash integrates image generation and text understanding directly, making the creation process smoother. Its multi-round conversational editing and broad knowledge let users adjust generated images in real time, serving the creative needs of both individuals and businesses.

【AiBase Summary:】

🎨 Native Image Generation: Gemini 2.0 Flash directly integrates image generation capabilities, avoiding information distortion and improving generation efficiency and accuracy.

🖌️ Real-time Editing: Supports multi-round conversational editing; users can use natural language to suggest modifications, and the AI can respond and adjust the image immediately.

📈 Enterprise Applications: Provides powerful tools for marketing teams and developers to quickly generate content, reducing design costs and improving work efficiency.

10. Remade AI Open-Sources 8 Wan2.1 Effect LoRAs, Sparking a New Wave in AI Video Creation

Remade AI has launched 8 open-source effect LoRAs based on the Wan2.1 model on the Hugging Face platform, attracting widespread attention from the tech community. These effect modules can not only transform static images into dynamic videos but also bring new creative possibilities to AI video generation. Through social media, users have expressed amazement at the effects, believing that they will promote the democratization of AI technology and accelerate the popularization of video creation.

【AiBase Summary:】

🎨 8 new effect LoRAs, including squeeze, cakeification, and expansion, enrich the possibilities of AI video creation.

💻 The Wan2.1 model, with its efficiency and multi-functionality, has become a top choice in the video generation field.

🌍 Remade AI invites global users to submit customized requests and promises to continue open-sourcing more effect modules.

11. Revolutionary Breakthrough in AI Lip-Syncing: Captions' New Model Mirage Creates Ultra-Realistic UGC Videos

Captions' new AI model, Mirage, marks a significant advance in video generation technology. The model can generate UGC-style (user-generated content) videos in real time, with more realistic facial expressions and body language than earlier technologies. It simplifies video production and significantly reduces cost and time, especially for advertisers and content creators. Mirage supports 29 languages, which helps with promotion in global markets. Planned improvements include model optimization and more customization options.

【AiBase Summary:】

🚀 The Mirage model can generate UGC videos in real time without relying on pre-recorded footage or traditional tools.

🎭 The generated characters' facial expressions and body language are highly realistic, making it difficult to distinguish them from real people.

🌍 Supports video generation in 29 languages, greatly simplifying the video production process and reducing costs and time.

Details: https://www.captions.ai/mirage

12. Google Launches Robot Control Model Gemini Robotics, Enabling Robots to Think and Act Like Humans

Google's Gemini Robotics is a robot control model designed to bring AI capabilities to robots so that they can act more intelligently in the physical world. Built on Gemini 2.0, it has strong multimodal understanding across text, images, audio, and video, and it generalizes well, adapting quickly to new environments and instructions. It also incorporates comprehensive safety considerations to keep robots safe while they carry out tasks.