Dear "Surfing Experts", do you remember those years when we chased after meme templates? From "Old Man on the Subway Looking at His Phone" to "Golden Museum Director Panda Head", they not only brought us laughter but also became unique symbols of internet culture. Today, with short videos taking the world by storm, meme templates have also evolved, transforming from static images to dynamic videos that are flooding major platforms.

However, creating a high-quality meme video is not easy. First, the essence of a meme template lies in its exaggerated expressions and large movements, which are hard for video generation technology to reproduce. Second, many existing methods fine-tune the parameters of the entire model. That is time-consuming and labor-intensive, and it can also weaken the model's generalization ability and make it incompatible with other derivative models: a true case of "pulling one hair affects the whole body".

So, is there a way for us to easily create lively, interesting, and high-fidelity meme videos? The answer is: Of course! HelloMeme is here to save you!

HelloMeme is like a tool that adds "plugins" to large models, enabling them to learn the "new skill" of creating meme videos without altering the original model. Its secret weapon is an attention mechanism optimized for two-dimensional feature maps, which improves the performance of the adapter. In simple terms, it's like giving the model a pair of "X-ray glasses" so that it captures the details of expressions and movements more accurately.

The working principle of HelloMeme is also quite interesting. It consists of three components: HMReferenceNet, HMControlNet, and HMDenoisingNet.

HMReferenceNet acts like a seasoned expert who has "seen countless images". It can extract high-fidelity features from reference images. It's like providing the model with a "meme creation guide" to understand what kind of expressions are truly "ridiculous".

HMControlNet, on the other hand, functions as a "motion capture master", extracting head poses and facial expression information. This is equivalent to equipping the model with a "motion capture system" that allows it to precisely capture every subtle change in expression.

HMDenoisingNet serves as the "video editor", responsible for integrating the information provided by the first two components to generate the final meme video. It's like an experienced editor who can perfectly blend various materials together to create a hilarious video masterpiece.
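To make the division of labor concrete, here is a toy sketch of how data might flow between the three components. Everything in it is a hypothetical stand-in: the function names, the `Frame` type, and the list-based "features" are invented for illustration and are not HelloMeme's actual API. It also assumes the reference features are computed once and reused for every frame.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Frame:
    """Toy driving frame (hypothetical): one number per signal."""
    head_pose: float
    expression: float


def reference_net(reference_image: List[float]) -> List[float]:
    """Toy stand-in for HMReferenceNet: returns appearance features."""
    return list(reference_image)  # identity "features" keep the sketch simple


def control_net(frame: Frame) -> List[float]:
    """Toy stand-in for HMControlNet: packs pose and expression into a vector."""
    return [frame.head_pose, frame.expression]


def denoising_net(appearance: List[float], control: List[float]) -> List[float]:
    """Toy stand-in for HMDenoisingNet: fuses both conditions into one frame."""
    return appearance + control  # list concatenation, not a real denoiser


def generate_video(reference_image: List[float],
                   driving_frames: List[Frame]) -> List[List[float]]:
    # Assumption: appearance features are extracted once and shared by all frames.
    appearance = reference_net(reference_image)
    return [denoising_net(appearance, control_net(f)) for f in driving_frames]


video = generate_video([0.1, 0.2], [Frame(0.0, 1.0), Frame(0.5, 0.2)])
```

Each output frame carries the same appearance information but its own pose and expression, which is the basic idea behind driving one reference image with a sequence of motions.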

To help these three components work well together, HelloMeme also employs a technique its authors call "Spatial Knitting Attentions". This mechanism weaves different feature information together like knitting a sweater, preserving the structural information in the two-dimensional feature map. As a result, the model doesn't have to relearn this foundational knowledge and can focus more on the "artistic creation" of meme production.
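One way to picture the weaving idea is attention applied along the rows of a feature map and then along its columns, so the grid is never flattened into a single long sequence. The sketch below is a minimal pure-Python illustration under that assumption. It uses identity projections instead of learned query, key, and value weights, and the function names are hypothetical rather than taken from the HelloMeme code.

```python
import math
from typing import List

Vector = List[float]


def attend(seq: List[Vector]) -> List[Vector]:
    """Self-attention over a sequence of vectors (identity projections)."""
    dim = len(seq[0])
    out = []
    for query in seq:
        # Scaled dot-product scores of this query against every key.
        scores = [sum(a * b for a, b in zip(query, key)) / math.sqrt(dim)
                  for key in seq]
        top = max(scores)  # subtract the max for a numerically stable softmax
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        out.append([sum(w * v[i] for w, v in zip(weights, seq)) / total
                    for i in range(dim)])
    return out


def row_then_column_attention(fmap: List[List[Vector]]) -> List[List[Vector]]:
    """fmap[h][w] is a feature vector; the H x W layout is kept throughout."""
    rows = [attend(row) for row in fmap]  # pass 1: along each row
    height, width = len(rows), len(rows[0])
    cols = [attend([rows[h][w] for h in range(height)])  # pass 2: along each column
            for w in range(width)]
    # Transpose back so the result is indexed [h][w] like the input.
    return [[cols[w][h] for w in range(width)] for h in range(height)]


feature_map = [[[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]],
               [[0.5, 0.5], [0.2, 0.8], [0.9, 0.1]]]
woven = row_then_column_attention(feature_map)
```

After both passes, every position has received information from its entire row and, through the row pass, from the whole map, while the output keeps the same height and width as the input.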

The most impressive aspect of HelloMeme is that it keeps the original parameters of the SD1.5 UNet model frozen during training and optimizes only the parameters of the inserted adapter. **This is like giving the model a "patch" rather than performing "major surgery".** The benefit of this approach is that it retains the powerful capabilities of the original model while endowing it with new abilities, a win-win situation.
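The "patch, not surgery" idea can be shown with a deliberately tiny example: a one-weight "base model" that stays frozen and a one-weight "adapter" that is the only thing gradient descent updates. This is an illustrative sketch, not HelloMeme's training code. The function name, the data, and the learning rate are all made up for the example.

```python
from typing import List, Tuple


def train_adapter_only(base_weight: float,
                       adapter_weight: float,
                       samples: List[Tuple[float, float]],
                       lr: float = 0.1,
                       steps: int = 200) -> Tuple[float, float]:
    """Fit y = base_weight * x + adapter_weight * x, updating only the adapter."""
    for _ in range(steps):
        grad = 0.0
        for x, y in samples:
            pred = base_weight * x + adapter_weight * x  # base output plus adapter residual
            grad += 2 * (pred - y) * x                   # d(squared error) / d(adapter_weight)
        adapter_weight -= lr * grad / len(samples)
        # base_weight is intentionally never touched: it is "frozen".
    return base_weight, adapter_weight


# The frozen base computes y = 2x; the new task needs y = 3x.
samples = [(1.0, 3.0), (2.0, 6.0)]
base, adapter = train_adapter_only(base_weight=2.0, adapter_weight=0.0, samples=samples)
```

The base weight comes out exactly as it went in, and the adapter alone learns the missing difference. Because the base is unchanged, swapping in a different base model that behaves similarly does not require retraining it, which is the intuition behind the compatibility with derivative models.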

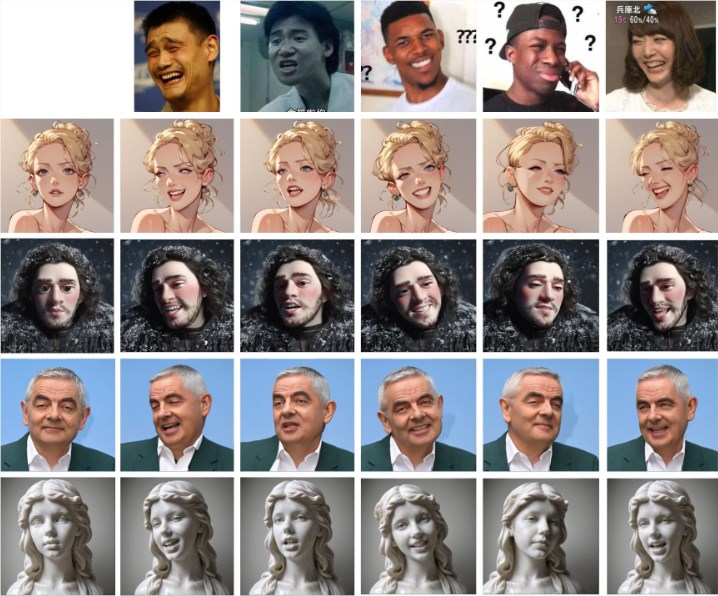

HelloMeme has achieved fantastic results in meme video generation tasks. The videos it produces not only feature lively expressions and smooth movements but also have high clarity, rivaling professional production levels. More importantly, HelloMeme is highly compatible with SD1.5 derivative models, which means we can draw on the strengths of those models to further improve the quality of meme videos.

Of course, HelloMeme still has plenty of room for improvement. For example, the generated videos still lag slightly behind some GAN-based methods in frame-to-frame continuity, and its ability to render different styles needs work. However, the research team behind HelloMeme has stated that they will keep improving the model, making it even more powerful and "ridiculous".

We believe that in the near future, HelloMeme will become our best tool for creating meme videos, allowing us to unleash our "ridiculous" creativity and dominate the short video era with memes!

Project address: https://songkey.github.io/hellomeme/